- Introduction

- Linux Administration Series

- Virtualization Fundamentals

- QEMU and KVM Architecture

- Installing KVM and QEMU

- Creating Virtual Machines with QEMU

- Managing VMs with libvirt and virsh

- Virtual Networking

- Virtual Machine Storage Management

- Live Migration

- LXC Containers

- Production Best Practices

- Troubleshooting

- Frequently Asked Questions

- Conclusion

Introduction

Virtualization is a cornerstone of modern infrastructure, enabling efficient resource utilization, workload isolation, and flexible deployment strategies. This comprehensive guide covers Linux virtualization technologies: QEMU/KVM for full virtualization, libvirt for VM management, and LXC for lightweight containerization.

We'll explore hypervisor architectures, virtual machine creation and management, storage configurations, network setup, live migration, and container isolation. Each section includes practical examples, architectural diagrams, and production best practices.

Linux Administration Series


This is Part VI of our comprehensive 7-part Linux administration guide:

- Part I: File System & Process Management

- Part II: User Authentication & LDAP

- Part III: UFW Firewall & Networking

- Part IV: systemd & SSH Hardening

- Part V: Postfix Email Server

- Part VI: QEMU KVM Virtualization (Current Article)

- Part VII: LVM & RAID Storage

Virtualization Fundamentals

Virtualization Types and Architecture

Virtualization technologies:

- Full Virtualization (KVM/QEMU):

- Complete hardware emulation

- Guest OS runs unmodified

- Hardware-assisted virtualization (Intel VT-x, AMD-V)

- QEMU provides device emulation

- KVM provides kernel-level acceleration

- Paravirtualization (Xen):

- Guest OS modified to be hypervisor-aware

- Communicates with the hypervisor through hypercalls rather than emulated hardware

- Better performance than full emulation

- Requires guest OS modifications

- Containerization (LXC/Docker):

- OS-level virtualization

- Shares host kernel

- Lightweight and fast startup

- Process and resource isolation

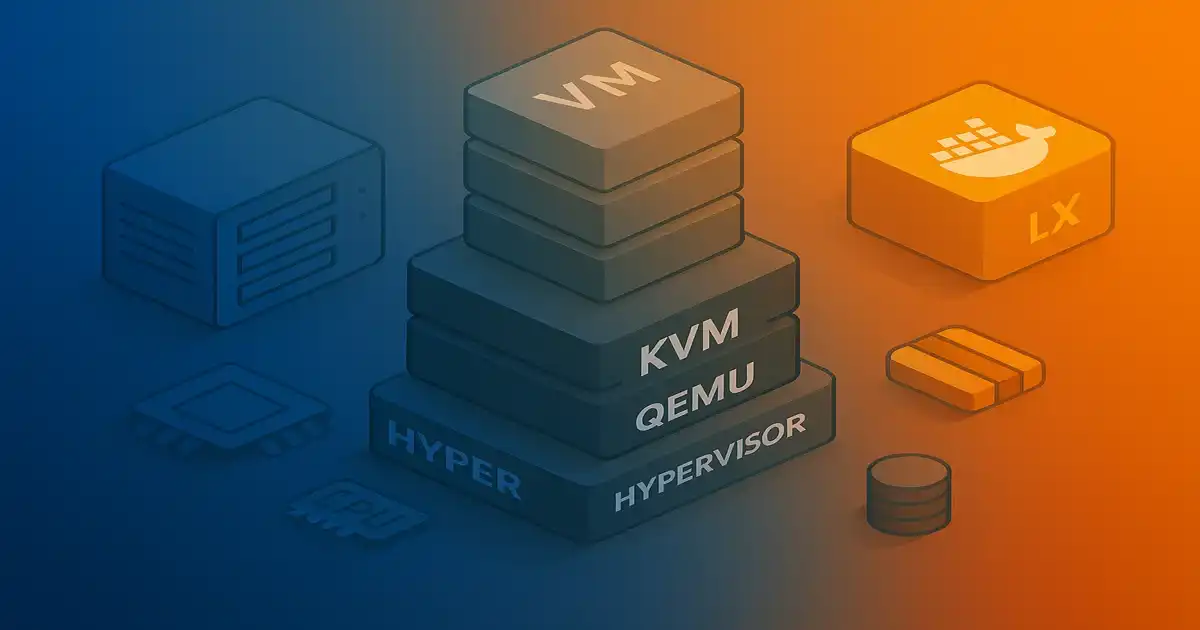

QEMU and KVM Architecture

QEMU/KVM Stack

Key components:

- KVM (Kernel-based Virtual Machine): Linux kernel module providing CPU and memory virtualization

- QEMU (Quick Emulator): User-space program emulating hardware devices

- VirtIO: Paravirtualized device drivers for optimal performance

- libvirt: Virtualization management API and daemon

Installing KVM and QEMU

System Requirements and Installation

Check CPU virtualization support:

# Check for Intel VT-x or AMD-V (KVM documentation: https://www.linux-kvm.org/page/Documents)

grep -Ec '(vmx|svm)' /proc/cpuinfo

# A non-zero count means the CPU advertises hardware virtualization (it may still need to be enabled in BIOS/UEFI)

# Check if KVM is available

lsmod | grep kvm

# Expected output:

# kvm_intel (Intel) or kvm_amd (AMD)

# kvm
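
The flag check above can be wrapped in a small helper that also says which KVM module to expect. This is only a sketch: `virt_vendor` is a hypothetical function name, and the optional file argument exists so the helper can be tried against a saved copy of /proc/cpuinfo.

```shell
# Report which hardware virtualization extension the CPU advertises.
# virt_vendor is a hypothetical helper; the optional argument points it
# at a file other than /proc/cpuinfo (useful for trying it out).
virt_vendor() {
    cpuinfo_file="${1:-/proc/cpuinfo}"
    if grep -qw vmx "$cpuinfo_file"; then
        echo "intel"      # VT-x present: expect the kvm_intel module
    elif grep -qw svm "$cpuinfo_file"; then
        echo "amd"        # AMD-V present: expect the kvm_amd module
    else
        echo "none"       # no flag found: check BIOS/UEFI settings
        return 1
    fi
}

# Usage: virt_vendor
```

The non-zero return status for "none" lets the helper be used directly in an `if` or `||` chain.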

Install KVM and QEMU:

# Update package list

sudo apt update

# Install QEMU, KVM, and utilities

sudo apt install qemu-kvm qemu-system qemu-utils

# Install additional tools

sudo apt install libvirt-daemon-system libvirt-clients bridge-utils

# Add user to necessary groups

sudo usermod -aG kvm $USER

sudo usermod -aG libvirt $USER

# Log out and back in (or reboot) for group changes to take effect; newgrp opens a subshell with the group applied immediately

newgrp kvm

newgrp libvirt

# Verify installation

virsh --version

qemu-system-x86_64 --version

# Check KVM acceleration (kvm-ok is provided by the cpu-checker package: sudo apt install cpu-checker)

kvm-ok

# Expected: "KVM acceleration can be used"
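
To confirm in one step that the installation left every expected tool on the PATH, something like the following can help. It is a minimal sketch; `missing_tools` is a hypothetical helper name, and the list of tools in the usage line is simply the set of commands used in this section.

```shell
# Print each command from the argument list that is not found on PATH.
# missing_tools is a hypothetical helper name.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Usage: missing_tools virsh qemu-system-x86_64 qemu-img kvm-ok
```

Empty output means everything was found; any name printed points at a package that still needs installing.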

Creating Virtual Machines with QEMU

Disk Image Formats

Create disk images:

# Create QCOW2 image (recommended for most use cases) - QEMU documentation: https://www.qemu.org/documentation/

qemu-img create -f qcow2 ubuntu-vm.qcow2 60G

# Create raw image (better performance, no features)

qemu-img create -f raw ubuntu-vm.raw 60G

# View image information

qemu-img info ubuntu-vm.qcow2

# Convert between formats

qemu-img convert -f raw -O qcow2 source.raw destination.qcow2

# Resize image

qemu-img resize ubuntu-vm.qcow2 +20G

# Create an overlay image on top of a base image (recent QEMU versions require the backing format to be given explicitly with -F)

qemu-img create -f qcow2 -b base-image.qcow2 -F qcow2 snapshot1.qcow2

Creating and Running Virtual Machines

Basic VM creation:

# Download Ubuntu ISO (example)

wget https://releases.ubuntu.com/22.04/ubuntu-22.04.3-live-server-amd64.iso

# Create disk image

qemu-img create -f qcow2 ubuntu-server.qcow2 40G

# Boot VM from ISO (installation)

qemu-system-x86_64 \

-enable-kvm \

-m 4G \

-cpu host \

-smp cores=2,threads=1 \

-cdrom ubuntu-22.04.3-live-server-amd64.iso \

-drive file=ubuntu-server.qcow2,format=qcow2 \

-boot d \

-vnc :1

# Boot installed VM

qemu-system-x86_64 \

-enable-kvm \

-m 4G \

-cpu host \

-smp cores=2,threads=1 \

-drive file=ubuntu-server.qcow2,format=qcow2,if=virtio \

-net nic,model=virtio \

-net user,hostfwd=tcp::2222-:22 \

-display sdl

QEMU command options explained:

- -enable-kvm: Use KVM acceleration

- -m 4G: Allocate 4GB RAM

- -cpu host: Use host CPU model (best performance)

- -smp cores=2,threads=1: 2 CPU cores

- -drive file=...,if=virtio: VirtIO disk (paravirtualized)

- -net nic,model=virtio: VirtIO network card

- -net user,hostfwd=tcp::2222-:22: Port forwarding (host:2222 → guest:22)

- -vnc :1: VNC server on display :1 (port 5901)

- -display sdl: Use SDL graphical display

Advanced VM configurations:

# VM with multiple disks

qemu-system-x86_64 \

-enable-kvm \

-m 8G \

-cpu host \

-smp 4 \

-drive file=system.qcow2,format=qcow2,if=virtio \

-drive file=data.qcow2,format=qcow2,if=virtio \

-net nic,model=virtio \

-net bridge,br=br0

# VM with USB passthrough

qemu-system-x86_64 \

-enable-kvm \

-m 4G \

-usb \

-device usb-host,vendorid=0x1234,productid=0x5678

# VM with PCI passthrough (GPU)

qemu-system-x86_64 \

-enable-kvm \

-m 8G \

-device vfio-pci,host=01:00.0

Managing VMs with libvirt and virsh

libvirt Architecture

Install libvirt and virt-manager:

# Install complete virtualization stack (libvirt documentation: https://libvirt.org/docs.html)

sudo apt install virt-manager libvirt-daemon-system libvirt-clients bridge-utils

# Start and enable libvirt

sudo systemctl start libvirtd

sudo systemctl enable libvirtd

# Check status

sudo systemctl status libvirtd

# Add user to libvirt group

sudo usermod -aG libvirt $USER

Creating VMs with virt-install

Basic VM creation:

# Create Ubuntu VM

virt-install \

--name ubuntu-server \

--memory 4096 \

--vcpus 2 \

--disk size=40,format=qcow2,path=/var/lib/libvirt/images/ubuntu-server.qcow2 \

--cdrom ~/Downloads/ubuntu-22.04.3-live-server-amd64.iso \

--os-variant ubuntu22.04 \

--network bridge=virbr0 \

--graphics vnc,listen=0.0.0.0 \

--noautoconsole

# Connect to VM console

virt-viewer ubuntu-server

# Or use VNC

# VNC port = 5900 + display number (check with: virsh vncdisplay ubuntu-server)
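
The port rule in the comment above is simple arithmetic, sketched here as a helper. `vnc_port` is a hypothetical name; it assumes the display string looks like ":1" or "127.0.0.1:1", which is the form `virsh vncdisplay` typically prints.

```shell
# Convert a VNC display string (":1" or "127.0.0.1:1") to a TCP port.
# vnc_port is a hypothetical helper name.
vnc_port() {
    display="${1##*:}"                  # keep the text after the last colon
    echo $((5900 + ${display:-0}))      # VNC port = 5900 + display number
}

# Usage: vnc_port "$(virsh vncdisplay ubuntu-server)"
```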

Advanced VM creation:

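# VM with specific CPU model and features (--import skips installation and boots the first disk, so that image must already contain an installed OS)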

virt-install \

--name production-server \

--memory 8192 \

--vcpus 4,maxvcpus=8,sockets=1,cores=4,threads=2 \

--cpu host-passthrough,cache.mode=passthrough \

--disk path=/var/lib/libvirt/images/production.qcow2,size=100,format=qcow2,bus=virtio,cache=writeback \

--disk path=/var/lib/libvirt/images/production-data.qcow2,size=200,format=qcow2,bus=virtio \

--network bridge=br0,model=virtio \

--graphics spice,listen=0.0.0.0,port=5901 \

--video qxl \

--os-variant ubuntu22.04 \

--import

# Import existing disk (skip installation)

virt-install \

--name imported-vm \

--memory 2048 \

--vcpus 1 \

--disk path=/path/to/existing-disk.qcow2,format=qcow2 \

--import \

--os-variant ubuntu22.04 \

--network bridge=virbr0
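
When a topology is passed to --vcpus, libvirt generally expects sockets × cores × threads to equal the maximum vCPU count. The sketch below checks that arithmetic before calling virt-install; `topology_vcpus` is a hypothetical helper name.

```shell
# Multiply out a CPU topology so it can be compared with maxvcpus.
# topology_vcpus SOCKETS CORES THREADS   (hypothetical helper)
topology_vcpus() {
    sockets=$1
    cores=$2
    threads=$3
    echo $((sockets * cores * threads))
}

# Usage: compare the result with the intended maxvcpus value
#   [ "$(topology_vcpus 1 4 2)" -eq 8 ] && echo "topology matches maxvcpus=8"
```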

Managing VMs with virsh

VM lifecycle management:

# List all VMs (virsh man page: https://libvirt.org/manpages/virsh.html)

virsh list --all

# Start VM

virsh start ubuntu-server

# Shutdown VM gracefully

virsh shutdown ubuntu-server

# Force stop VM

virsh destroy ubuntu-server

# Reboot VM

virsh reboot ubuntu-server

# Pause VM

virsh suspend ubuntu-server

# Resume paused VM

virsh resume ubuntu-server

# Auto-start VM on boot

virsh autostart ubuntu-server

virsh autostart ubuntu-server --disable

# Delete VM (keeps disks)

virsh undefine ubuntu-server

# Delete VM and all storage

virsh undefine ubuntu-server --remove-all-storage
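
A common pattern is to combine the graceful and forced stop commands above: ask the guest to shut down, wait, and only then fall back to `virsh destroy`. The following is a sketch under stated assumptions: `shutdown_or_destroy` and the `VIRSH` override variable are hypothetical names, and the 60-second default timeout is arbitrary.

```shell
# VIRSH can be overridden (for example with a stub) when trying this out.
VIRSH="${VIRSH:-virsh}"

# shutdown_or_destroy DOMAIN [TIMEOUT_SECONDS]
# Hypothetical helper: request a graceful shutdown, poll the domain state,
# and force a power-off if the guest has not stopped before the timeout.
shutdown_or_destroy() {
    domain=$1
    timeout=${2:-60}
    waited=0
    "$VIRSH" shutdown "$domain" || return 1
    while [ "$waited" -lt "$timeout" ]; do
        if [ "$("$VIRSH" domstate "$domain")" = "shut off" ]; then
            return 0
        fi
        sleep 1
        waited=$((waited + 1))
    done
    echo "Timed out after ${timeout}s; forcing power-off of $domain" >&2
    "$VIRSH" destroy "$domain"
}

# Usage: shutdown_or_destroy ubuntu-server 120
```

A forced power-off is equivalent to pulling the plug, so a generous timeout is usually the safer choice for guests running databases.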

VM configuration and information:

# View VM info

virsh dominfo ubuntu-server

# View VM configuration (XML)

virsh dumpxml ubuntu-server > ubuntu-server.xml

# Edit VM configuration

virsh edit ubuntu-server

# View VM console

virsh console ubuntu-server

# View VNC display

virsh vncdisplay ubuntu-server

# Take VM screenshot

virsh screenshot ubuntu-server screenshot.ppm

Resource management:

# View current memory

virsh dominfo ubuntu-server | grep memory

# Set memory in the persistent config (takes effect at next boot; must not exceed the maximum memory)

virsh setmem ubuntu-server 4G --config

# Set maximum memory

virsh setmaxmem ubuntu-server 8G --config

# Change vCPU count (online)

virsh setvcpus ubuntu-server 4 --live

# Set vCPU count permanently

virsh setvcpus ubuntu-server 4 --config

# View CPU stats

virsh cpu-stats ubuntu-server

# View memory stats

virsh dommemstat ubuntu-server
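
`virsh dominfo` reports memory in KiB, while the commands above use suffixed values such as 4G. To compare the two, a small converter can help. This is a sketch: `to_kib` is a hypothetical helper that handles only the K, M, and G suffixes and treats a bare number as KiB (virsh's default unit).

```shell
# Convert a size such as 4G, 512M or 2048 (bare numbers are KiB) to KiB.
# to_kib is a hypothetical helper name.
to_kib() {
    value=${1%[KkMmGg]}         # numeric part
    suffix=${1#"$value"}        # unit letter, if any
    case "$suffix" in
        ""|K|k) echo "$value" ;;
        M|m)    echo $((value * 1024)) ;;
        G|g)    echo $((value * 1024 * 1024)) ;;
    esac
}

# Usage: to_kib 4G
```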

Virtual Networking

Network Architecture

Network modes:

- NAT (Network Address Translation): Default, VMs share host IP

- Bridged: VMs appear on physical network

- Host-only: VMs communicate with host only

- Isolated: VMs communicate with each other only

Configure bridged networking:

# Install bridge utilities

sudo apt install bridge-utils

# Create bridge configuration

sudo vim /etc/netplan/01-netcfg.yaml

# Configuration:

network:

  version: 2

  ethernets:

    eth0:

      dhcp4: no

  bridges:

    br0:

      interfaces: [eth0]

      dhcp4: yes

# Apply configuration

sudo netplan apply

# Verify bridge

brctl show

ip addr show br0

# Create VM with bridged network

virt-install \

--name bridged-vm \

--memory 2048 \

--vcpus 1 \

--disk size=20 \

--network bridge=br0 \

--os-variant ubuntu22.04 \

--cdrom ubuntu.iso

Manage virtual networks:

# List networks

virsh net-list --all

# View network details

virsh net-info default

virsh net-dumpxml default

# Create new network

cat > isolated-network.xml <<EOF

<network>

<name>isolated</name>

<bridge name='virbr1'/>

<ip address='192.168.100.1' netmask='255.255.255.0'>

<dhcp>

<range start='192.168.100.100' end='192.168.100.200'/>

</dhcp>

</ip>

</network>

EOF

virsh net-define isolated-network.xml

virsh net-start isolated

virsh net-autostart isolated

# Delete network

virsh net-destroy isolated

virsh net-undefine isolated
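
If several isolated networks are needed, the XML above can be generated from a few parameters instead of being edited by hand. The sketch below assumes a /24 subnet with the same .1 gateway and .100 to .200 DHCP range as the example; `isolated_net_xml` and the names in the usage line are hypothetical.

```shell
# Print a libvirt network definition for an isolated /24 network.
# isolated_net_xml NAME BRIDGE PREFIX   (hypothetical helper)
# PREFIX is the first three octets, for example 192.168.110
isolated_net_xml() {
    name=$1
    bridge=$2
    prefix=$3
    cat <<EOF
<network>
  <name>${name}</name>
  <bridge name='${bridge}'/>
  <ip address='${prefix}.1' netmask='255.255.255.0'>
    <dhcp>
      <range start='${prefix}.100' end='${prefix}.200'/>
    </dhcp>
  </ip>
</network>
EOF
}

# Usage:
#   isolated_net_xml lab virbr2 192.168.110 > lab-network.xml
#   virsh net-define lab-network.xml
```

Leaving out a forward element is what keeps the network isolated, exactly as in the hand-written definition.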

Virtual Machine Storage Management

Storage Pools

Create and manage storage pools:

# List storage pools

virsh pool-list --all

# View pool details

virsh pool-info default

virsh pool-dumpxml default

# Create directory-based pool

mkdir -p /mnt/vm-storage

virsh pool-define-as vmpool dir --target /mnt/vm-storage

virsh pool-start vmpool

virsh pool-autostart vmpool

# Create LVM-based pool

virsh pool-define-as lvm-pool logical --source-name vg-vms --target /dev/vg-vms

virsh pool-start lvm-pool

# List volumes in pool

virsh vol-list vmpool

# Create volume

virsh vol-create-as vmpool data-disk.qcow2 50G --format qcow2
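
Pool capacity is worth checking before creating large volumes. The sketch below computes how full a pool is from `virsh pool-info` output. It assumes the `--bytes` option prints the Capacity and Allocation fields as plain byte counts; `pool_usage_percent` is a hypothetical helper name.

```shell
# Read pool-info style text on stdin and print allocation as a percentage.
# pool_usage_percent is a hypothetical helper; it assumes lines of the
# form "Capacity: <bytes>" and "Allocation: <bytes>".
pool_usage_percent() {
    awk '
        $1 == "Capacity:"   { cap = $2 }
        $1 == "Allocation:" { alloc = $2 }
        END {
            if (cap > 0) printf "%d\n", (alloc * 100) / cap
            else exit 1
        }
    '
}

# Usage: virsh pool-info --bytes vmpool | pool_usage_percent
```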

Attach and detach disks:

# Attach disk to running VM

virsh attach-disk ubuntu-server \

/var/lib/libvirt/images/data.qcow2 \

vdb \

--targetbus virtio \

--persistent

# Detach disk

virsh detach-disk ubuntu-server vdb --persistent

# Attach CD-ROM

virsh attach-disk ubuntu-server \

/path/to/cdrom.iso \

hdc \

--type cdrom \

--mode readonly

# Change CD-ROM media

virsh change-media ubuntu-server hdc /path/to/new.iso

Disk snapshots:

# Create snapshot

virsh snapshot-create-as ubuntu-server \

snapshot1 \

"Pre-upgrade snapshot" \

--disk-only \

--atomic

# List snapshots

virsh snapshot-list ubuntu-server

# View snapshot info

virsh snapshot-info ubuntu-server snapshot1

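# Revert to snapshot (older libvirt releases cannot revert or delete external --disk-only snapshots; use internal snapshots there)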

virsh snapshot-revert ubuntu-server snapshot1

# Delete snapshot

virsh snapshot-delete ubuntu-server snapshot1

Live Migration

Migration Architecture

Migrate VM between hosts:

# Prerequisites:

# - Shared storage (NFS, iSCSI, etc.)

# - Compatible libvirt and QEMU versions on both hosts (ideally identical)

# - SSH access between hosts

# - Compatible CPU models

# Live migrate VM

virsh migrate --live ubuntu-server \

qemu+ssh://destination-host/system \

--persistent \

--verbose

# Offline migration

virsh migrate ubuntu-server \

qemu+ssh://destination-host/system \

--offline \

--persistent

# Migration without shared storage (--copy-storage-all also copies the disk images; --bandwidth is in MiB/s)

virsh migrate --live ubuntu-server \

qemu+ssh://destination-host/system \

--persistent \

--undefinesource \

--copy-storage-all \

--verbose \

--bandwidth 1000
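
The --bandwidth value gives a rough floor for how long a migration takes: guest memory divided by the allowed transfer rate. The sketch below does that division, rounded up. It is only a lower bound, since pages dirtied during the copy are sent again and any disk copy adds more time; `min_transfer_seconds` is a hypothetical helper name.

```shell
# Lower bound on memory transfer time, rounded up to whole seconds.
# min_transfer_seconds MEMORY_MIB BANDWIDTH_MIB_PER_S   (hypothetical helper)
min_transfer_seconds() {
    memory_mib=$1
    bandwidth_mibps=$2
    echo $(( (memory_mib + bandwidth_mibps - 1) / bandwidth_mibps ))
}

# Usage: min_transfer_seconds 8192 1000
```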

LXC Containers

Container Architecture

Install LXC:

# Install LXC (LXC documentation: https://linuxcontainers.org/lxc/documentation/)

sudo apt install lxc lxc-templates

# Check LXC configuration

lxc-checkconfig

# List available templates

ls /usr/share/lxc/templates/

Create and manage containers:

# Create Ubuntu container

sudo lxc-create -n container1 -t ubuntu

# Create with specific release

sudo lxc-create -n ubuntu2204 -t ubuntu -- -r jammy

# Create Debian container

sudo lxc-create -n debian-container -t debian

# Create Alpine container (lightweight)

sudo lxc-create -n alpine-container -t alpine

# List containers

sudo lxc-ls -f

# Start container

sudo lxc-start -n container1

# Open a shell inside the running container

sudo lxc-attach -n container1

# Execute command in container

sudo lxc-attach -n container1 -- apt update

# Stop container

sudo lxc-stop -n container1

# Destroy container

sudo lxc-destroy -n container1

Container configuration:

# View container config

cat /var/lib/lxc/container1/config

# Edit container config

sudo vim /var/lib/lxc/container1/config

# Example configuration:

lxc.net.0.type = veth

lxc.net.0.link = lxcbr0

lxc.net.0.flags = up

lxc.net.0.hwaddr = 00:16:3e:xx:xx:xx

# Resource limits

lxc.cgroup2.memory.max = 512M

# 50% of one CPU (quota and period in microseconds; keep comments on their own line)

lxc.cgroup2.cpu.max = 50000 100000

# Clone container

sudo lxc-copy -n container1 -N container2

# Snapshot container

sudo lxc-snapshot -n container1

# List snapshots

sudo lxc-snapshot -n container1 -L

# Restore snapshot

sudo lxc-snapshot -n container1 -r snap0
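
The cgroup v2 cpu.max value used in the configuration above is a quota and a period, both in microseconds. The sketch below derives the pair from a percentage of one CPU; `cpu_max_value` is a hypothetical helper name, and the 100000 µs default period matches the example.

```shell
# Print a "quota period" pair for cpu.max from a percentage of one CPU.
# cpu_max_value PERCENT [PERIOD_US]   (hypothetical helper)
cpu_max_value() {
    percent=$1
    period_us=${2:-100000}
    echo "$((percent * period_us / 100)) $period_us"
}

# Usage: echo "lxc.cgroup2.cpu.max = $(cpu_max_value 50)"
```

Values above 100 express more than one CPU, so 200 allows the container two full cores' worth of time per period.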

Production Best Practices

- Resource Allocation:

- Don't over-commit CPU and memory

- Monitor host resource usage

- Use resource limits (cgroups)

- Plan for peak workloads

- Storage:

- Use QCOW2 for flexibility, raw for performance

- Implement regular backups

- Use LVM or ZFS for advanced features

- Monitor disk I/O performance

- Networking:

- Use VirtIO drivers for best performance

- Implement network isolation

- Configure appropriate firewall rules

- Monitor network bandwidth

- Security:

- Keep hypervisor updated

- Use SELinux or AppArmor

- Isolate management network

- Implement access controls

- Regular security audits

- High Availability:

- Use shared storage for live migration

- Implement automated failover

- Regular backup and disaster recovery testing

- Monitor VM health

Troubleshooting

KVM not working:

# Check if CPU supports virtualization

grep -Ec '(vmx|svm)' /proc/cpuinfo

# Check if KVM modules are loaded

lsmod | grep kvm

# Load KVM modules

sudo modprobe kvm

sudo modprobe kvm_intel # or kvm_amd

# Check nested virtualization (on AMD hosts, read /sys/module/kvm_amd/parameters/nested instead)

cat /sys/module/kvm_intel/parameters/nested
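
The nested parameter reads Y or 1 when enabled, depending on module and kernel version. The sketch below checks whichever KVM module is loaded and prints a readable result. `nested_status` and the `KVM_SYSFS_ROOT` override are hypothetical names; the override exists only so the helper can be tried against a scratch directory.

```shell
# Report whether nested virtualization is enabled for the loaded KVM module.
# nested_status is a hypothetical helper; KVM_SYSFS_ROOT is a hypothetical
# override for the sysfs module directory.
nested_status() {
    for module in kvm_intel kvm_amd; do
        param="${KVM_SYSFS_ROOT:-/sys/module}/$module/parameters/nested"
        [ -r "$param" ] || continue
        case "$(cat "$param")" in
            Y|y|1) echo "$module: nested virtualization enabled" ;;
            *)     echo "$module: nested virtualization disabled" ;;
        esac
        return 0
    done
    echo "no KVM module loaded" >&2
    return 1
}

# Usage: nested_status
```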

VM won't start:

# Check VM status

virsh domstate ubuntu-server

# View error logs

sudo tail -n 50 /var/log/libvirt/qemu/ubuntu-server.log

sudo journalctl -u libvirtd -f

# Validate XML configuration

sudo virt-xml-validate /etc/libvirt/qemu/ubuntu-server.xml

# Check resource availability

virsh nodeinfo

free -h

df -h /var/lib/libvirt/images/

Network issues:

# Check virtual networks

virsh net-list --all

virsh net-info default

# Restart network

virsh net-destroy default

virsh net-start default

# Check bridge

brctl show

ip addr show virbr0

# Check iptables rules

sudo iptables -L -n -v -t nat

Container issues:

# Check container state

sudo lxc-info -n container1

# Attach to the container console (tty 0) to watch boot and login messages

sudo lxc-console -n container1 -t 0

# Check cgroup configuration (cgroup v2 memory limit, matching lxc.cgroup2.memory.max)

sudo lxc-cgroup -n container1 memory.max

# Verify network

sudo lxc-attach -n container1 -- ip addr

Frequently Asked Questions

Q: What is the difference between KVM and QEMU?

KVM (Kernel-based Virtual Machine) is a Linux kernel module providing hardware virtualization support using Intel VT-x or AMD-V. QEMU is a user-space emulator that uses KVM for acceleration. Together, QEMU/KVM provides full virtualization with near-native performance: KVM handles CPU and memory virtualization, while QEMU emulates devices and I/O.

Q: How does libvirt simplify VM management?

Libvirt provides a unified API for managing VMs across hypervisors such as KVM, Xen, and VirtualBox. It ships the virsh command-line tool, uses XML-based configuration, and serves as the backend for the virt-manager GUI. Benefits include a consistent management interface, network and storage abstraction, live migration support, and integration with orchestration tools such as OpenStack.

Q: What is the difference between VMs and containers?

VMs run complete operating systems with separate kernels on top of a hypervisor, providing strong isolation at the cost of higher overhead. Containers share the host kernel and use namespaces and cgroups for isolation, with minimal overhead. Use VMs to run different operating systems or to enforce strong security boundaries; use containers for application isolation and rapid deployment.

Q: How do I create a VM with virt-install?

Use virt-install with an ISO or a network install source, for example: "virt-install --name myvm --memory 2048 --vcpus 2 --disk size=20 --cdrom /path/to/ubuntu.iso --network bridge=virbr0". For a network install, use "--location" instead of "--cdrom". For a headless installation, combine "--location" with "--graphics none --extra-args console=ttyS0" so the installer uses the serial console. The command creates the VM with the specified resources and starts the installer.

Q: What are LXC containers and when should I use them?

LXC (Linux Containers) provides OS-level virtualization using kernel cgroups and namespaces. Containers boot a full init system much like a VM but share the host kernel, offering lightweight isolation. Use LXC to run multiple Linux distributions on the same kernel, for development environments, or for application isolation without VM overhead. Docker builds on LXC concepts.

Q: How does CPU pinning improve VM performance?

CPU pinning assigns specific physical CPU cores to VMs, preventing CPU scheduling overhead and cache thrashing. Configure with virsh vcpupin or in domain XML with vcpu placement='static' and cputune sections. Benefits include consistent performance, reduced latency, and better CPU cache utilization for latency-sensitive workloads.
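
As a sketch of the virsh vcpupin approach, the helper below prints one pinning command per vCPU, mapping vCPU 0, 1, 2, and so on to the host cores given as arguments. `pin_commands` is a hypothetical name, and the host core numbers in the usage line are placeholders to be chosen from the host's actual topology. The commands are printed rather than executed so they can be reviewed first.

```shell
# Print virsh vcpupin commands pinning vCPU N to the Nth host core listed.
# pin_commands DOMAIN HOST_CPU...   (hypothetical helper)
pin_commands() {
    domain=$1
    shift
    vcpu=0
    for host_cpu in "$@"; do
        echo "virsh vcpupin $domain $vcpu $host_cpu --live --config"
        vcpu=$((vcpu + 1))
    done
}

# Usage: pin_commands ubuntu-server 2 3 4 5
```

Piping the output to `sh` applies the pinning once the mapping looks right.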

Q: What is live migration and how does it work?

Live migration moves running VMs between hosts with minimal downtime. QEMU/KVM copies memory pages while the VM keeps running, then pauses it briefly to transfer the remaining state. It requires shared storage (NFS/iSCSI) or a storage copy during migration, compatible CPU features, and network connectivity between the hosts. Use virsh migrate to start a migration. Live migration is essential for host maintenance without service interruption.

Conclusion

Linux virtualization provides powerful options for workload isolation and resource management. QEMU/KVM offers full virtualization with hardware acceleration, libvirt provides comprehensive management tools, and LXC delivers lightweight containerization.

Choose appropriate technologies: QEMU/KVM for running different operating systems with full isolation, libvirt for centralized VM management, and LXC for lightweight application containers. Implement proper resource allocation, security measures, backup strategies, and monitoring for production environments.